Real-time Site Scanning for Improving Workplace Efficiency

Highlight

Worksites are often administered by having supervisors visually check work progress and inventory levels, or the state of maintenance at social infrastructure facilities. Meanwhile, a shrinking workforce and the drive to rationalize operations are increasing demand for quantitative checks and for the ability to verify what is happening at a site remotely and in real time. In response, Hitachi Industry & Control Solutions, Ltd. has combined new 3D technologies with AI image analysis technology from its surveillance camera business to develop digital twin technology that supports frontline workers by letting them view a site from any angle in real time and perform measurements and counts.

This article describes this technology and how Hitachi plans to deploy it for customers in the construction and industrial sectors.

1. Introduction

Many worksites still require frontline workers to go to the site and perform visual checks for tasks such as monitoring work progress or accepting delivery of supplies used at construction sites. However, there is strong demand from such sites for the ability to monitor what is happening in real time 1).

Recognizing this situation, Hitachi Industry & Control Solutions, Ltd. has identified a need to reduce the work required to perform visual checks at large worksites for tasks such as monitoring work progress or performing safety checks. Because a lot of time is spent making daily visits to such worksites and walking around to see what is going on, there is strong demand for an on-screen overview of the site. While this is currently done by flying a drone over the site to shoot video, the approach is unsuitable for sites that want to check progress frequently, because each check requires a new drone flight. As worksites may be scattered across Japan, another issue is how to reduce the cost of visiting remote sites. Users also want to be able to determine the sizes, counts, and spatial arrangements of objects that appear in video images.

To satisfy these demands, Hitachi Industry & Control Solutions is developing a technology that it calls real-time field scanning (RTFS).

2. Overview of New Technology

Figure 1: Examples of Conventional Video Surveillance

Conventional practice has been to install cameras that provide a view of the site from fixed positions. This makes it difficult to change viewpoint, perform surveillance of the overall site, or determine the size and number of objects that appear in the images.

Figure 1 shows how video surveillance has been done in the past. While the installation of cameras makes it possible to view the site remotely, there is also strong demand for surveillance of the overall site and for determining the size or number of objects that appear in the images, requirements that are difficult to satisfy given the fixed viewpoints of the cameras and the inability of their two-dimensional images to provide accurate size information.

Because the new technology captures images from close to the objects of interest and uses three-dimensional (3D) digital data to present the scene from different angles in real time, users can shift the viewpoint and take measurements of any object visible in the images.

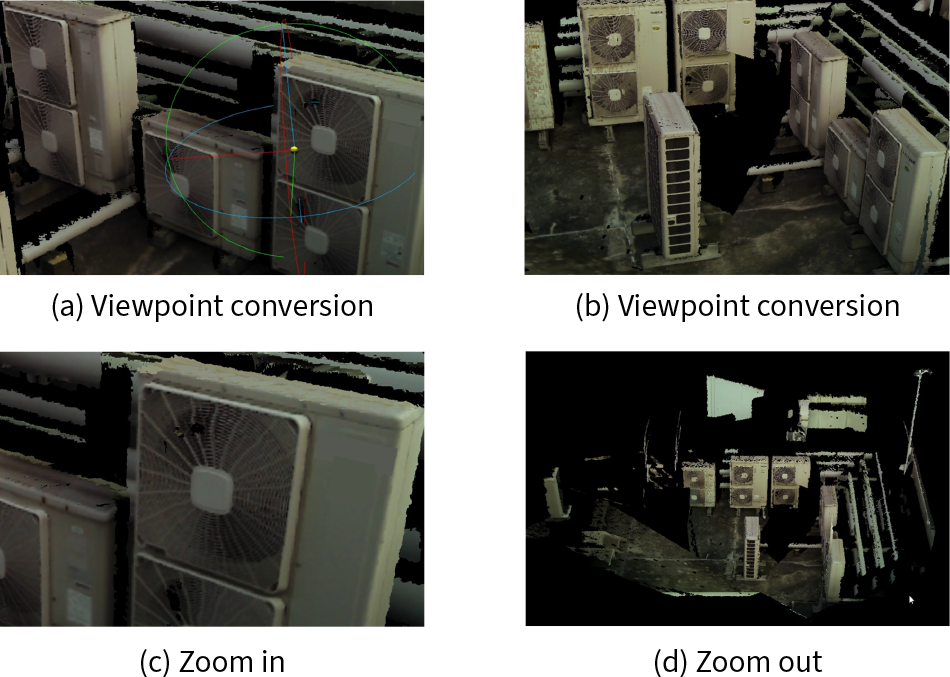

Figure 2 shows how viewpoint conversion is achieved. As it works by projecting images onto a 3D model, it allows users to view the scene however they want, thereby making worksite viewing more efficient. Available user operations include changing the viewpoint [images (a) and (b) in the figure], rotating the view and zooming in [image (c)], and zooming out [image (d)].

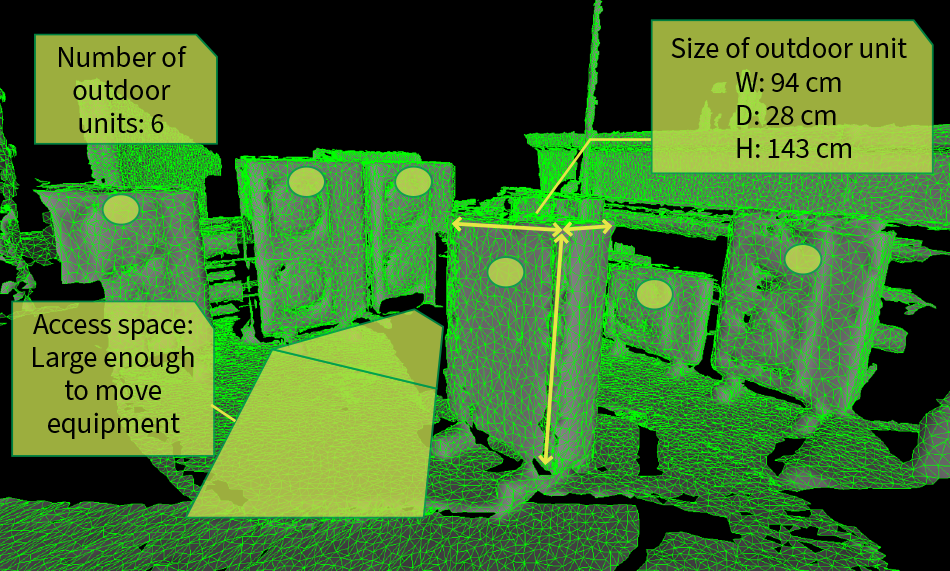

Figure 3 shows how object measurement is performed. Image recognition and other such technology are used to detect visible objects, and information is acquired that is useful for delivery acceptance, maintenance, and other tasks. This includes the size and number of objects and spatial arrangement such as the space available for access.

Figure 2: Examples of Viewpoint Conversion

As the acquired data is in the form of a 3D model onto which images have been projected, users can see the scene in whatever way they want, including by changing the viewpoint as in (a) and (b), by rotating the view and zooming in as in (c), and by zooming out as in (d). This makes worksite viewing more efficient.

Figure 3: Example Object Measurement by Image Recognition with 3D Data

The size of objects detected by image recognition can be measured using 3D data. Examples include identifying the outdoor units of an air conditioner from the detected objects and measuring their size using 3D data, using image recognition in tandem with the 3D data to obtain precise counts, or using the 3D information to measure the amount of access space available and simulating whether this provides enough room to move equipment.

2.1 System Overview

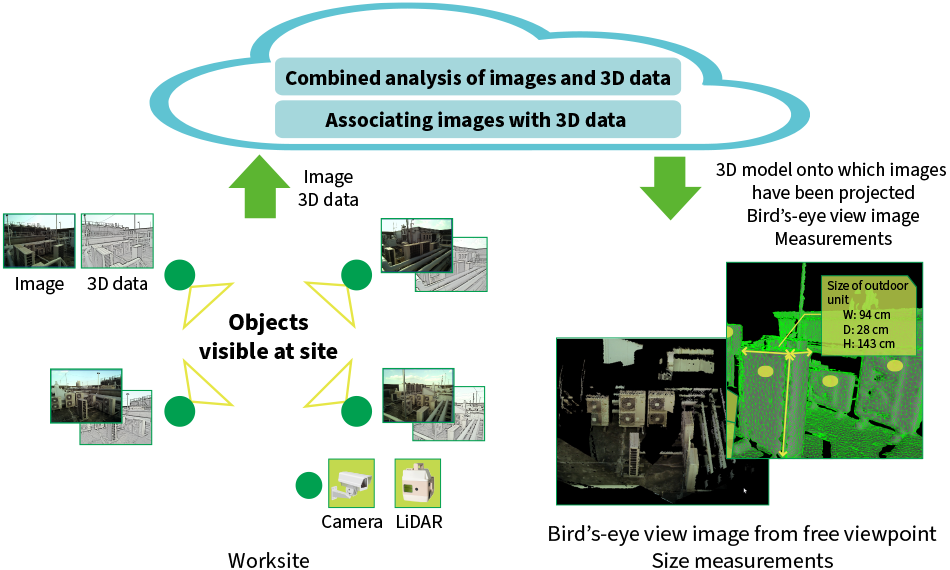

Figure 4: System Diagram

Surveillance cameras and LiDAR (light detection and ranging) sensors are installed at the user site close to the objects of interest and are operated simultaneously. The camera images and 3D LiDAR data are forwarded to the cloud, where the positional relationship between them is calculated by associating them with one another. A combined analysis of the images and 3D data is used to perform measurements or to generate images from any viewpoint, and the results are made available to the worksite.

Figure 4 shows a block diagram of the system. As shown on the bottom left, the imaging of objects of interest is performed from nearby using surveillance cameras and 3D sensor units [measurement instruments that use range-finding sensors such as light detection and ranging (LiDAR)]. LiDAR is a remote sensing technology that can acquire accurate distance measurements by using a laser beam to measure the distance to objects and to determine their shape. The images and 3D data are acquired by using these different sensors to perform sensing simultaneously and from close range.
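As a rough illustration of how a laser return becomes a 3D point, the sketch below assumes a pulsed time-of-flight sensor that reports the round-trip time together with the beam's azimuth and elevation angles. The article does not say which ranging principle or sensor the system uses, so the function names and the angle convention here are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_range_m(round_trip_s: float) -> float:
    """Distance to the target: the pulse travels out and back, so halve the path."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def polar_to_xyz(range_m: float, azimuth_rad: float, elevation_rad: float):
    """Convert one range/angle sample into sensor-frame coordinates.

    Assumed convention: x forward, y left, z up; azimuth is measured in the
    horizontal plane and elevation upward from that plane.
    """
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )


# A return that arrives 100 ns after the pulse left is roughly 15 m away.
sample_range = tof_range_m(100e-9)
sample_point = polar_to_xyz(sample_range, math.radians(30.0), math.radians(5.0))
```

Repeating this conversion for every return in a sweep yields the point cloud that the later processing steps work on.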

The acquired images and 3D data are then sent to the cloud where their relative positions are determined by associating them with one another. The resulting information provides the basis for analysis technology that combines the images and 3D data to perform measurements or to display the scene from the user’s choice of viewpoint.

The data acquired by this means can be output in the form of measurement results, overview images, or as a 3D visual model with projected images that can be viewed from any viewpoint. These in turn can be distributed to workplaces where they can be viewed on-site using a tablet or integrated with customer systems.

The system is intended to provide measurements with centimeter accuracy and high-quality images that can be viewed from any viewpoint. The following sections describe two of its key technologies.

2.2 Associating 3D LiDAR Data with Camera Images

If the 3D data is to be used to perform accurate measurements on objects that appear in the images, it is first necessary to obtain an accurate determination of the correspondence between the images and the data. As the system is based on the use of 3D data and images obtained from different devices (such as existing surveillance cameras), an accurate correspondence must be determined using only the acquired images and data. Unfortunately, as these take the forms of visual and geometric information respectively, direct comparison is not possible.

Instead, Hitachi developed an association technology that determines the correspondence from structural features that appear in both the images and the 3D data. First, views of the 3D data are generated from various viewpoints and feature values are calculated for the structural features in each view. Next, feature values are likewise calculated for the structural features in the surveillance camera images. The two sets of feature values are compared and the viewpoint that gives the strongest correlation between the 3D data and the images is identified, thereby accurately determining the correspondence between them in a way that is not influenced by local factors.
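The viewpoint search can be pictured with a minimal sketch. It assumes that the structural features are binary edge maps, that the candidate views have already been rendered from the 3D data, and that similarity is scored by Pearson correlation. None of these choices is confirmed by the article, and the names `normalized_correlation` and `best_viewpoint` are hypothetical.

```python
import math


def normalized_correlation(map_a, map_b):
    """Pearson correlation between two equally sized 2D feature maps."""
    flat_a = [value for row in map_a for value in row]
    flat_b = [value for row in map_b for value in row]
    if len(flat_a) != len(flat_b) or not flat_a:
        raise ValueError("feature maps must be non-empty and the same size")
    mean_a = sum(flat_a) / len(flat_a)
    mean_b = sum(flat_b) / len(flat_b)
    covariance = sum((a - mean_a) * (b - mean_b) for a, b in zip(flat_a, flat_b))
    spread = math.sqrt(
        sum((a - mean_a) ** 2 for a in flat_a)
        * sum((b - mean_b) ** 2 for b in flat_b)
    )
    # A constant map has no structure to correlate with, so score it as 0.
    return covariance / spread if spread > 0 else 0.0


def best_viewpoint(camera_edges, rendered_edges_by_view):
    """Return the candidate view whose edge map correlates best with the camera's."""
    scores = {
        view: normalized_correlation(camera_edges, edges)
        for view, edges in rendered_edges_by_view.items()
    }
    best = max(scores, key=scores.get)
    return best, scores[best]


# Toy data: the camera sees a vertical edge in the middle column.
camera_edges = [[0, 1, 0], [0, 1, 0], [0, 1, 0]]
candidates = {
    "left": [[1, 0, 0], [1, 0, 0], [1, 0, 0]],
    "center": [[0, 1, 0], [0, 1, 0], [0, 1, 0]],
    "right": [[0, 0, 1], [0, 0, 1], [0, 0, 1]],
}
view, score = best_viewpoint(camera_edges, candidates)
```

Because the score is computed over the whole map rather than from a handful of matched keypoints, a mismatch in one small region has limited effect on the result, which is in the spirit of the robustness to local factors described above.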

2.3 Cleanup Technology

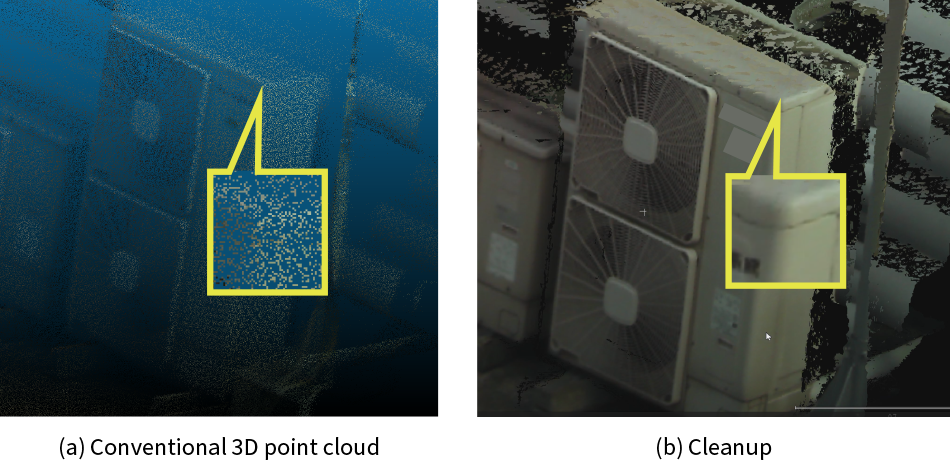

Figure 5: Cleanup Technology

Hitachi has developed a technology for cleaning up data to provide high-resolution views of the site or highly accurate measurements. While conventional point clouds are unable to identify the edges of objects accurately due to their coarse resolution in such regions, the projection mapping technology used here for associating the image with the 3D model means that image information can be used to locate edges precisely. This improves the quality of the images generated from the chosen viewpoint and enables precise object measurement by accurately identifying the start and end points.

The primary method for object measurement in the past used 3D data acquired from a drone-mounted LiDAR sensor or similar. Typically, this involved using a point cloud made up of a large number of 3D data points. The difficulty with this, unfortunately, is that the low density of points makes edges and other such regions appear indistinct when enlarged. Given the difficulty of accurately specifying the start and end points for the object being measured [see Figure 5(a)], this leads to the issue that small variations can result in large measurement errors.

In response, Hitachi Industry & Control Solutions has developed a cleanup technology that addresses this issue. It works by specifying the start and end points in the image rather than in the 3D data. These points are then projected onto the 3D data to perform the measurement. As images frequently provide a clear view of locations such as edges that make useful measurement points, this enables accurate measurements to be made.
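A minimal sketch of this kind of two-point measurement follows. It assumes an ideal pinhole camera without lens distortion, and it assumes that the registered 3D data supplies a depth value (distance along the optical axis) for each selected pixel. The intrinsic parameters `fx`, `fy`, `cx`, `cy` and the `depth_by_pixel` lookup are hypothetical stand-ins for whatever the real system provides.

```python
import math


def backproject(pixel, depth_m, fx, fy, cx, cy):
    """Lift an image pixel to a 3D point in the camera frame using its depth."""
    u, v = pixel
    return ((u - cx) * depth_m / fx, (v - cy) * depth_m / fy, depth_m)


def measure_between_pixels(start_pixel, end_pixel, depth_by_pixel, intrinsics):
    """Length in meters between two points picked in the image."""
    fx, fy, cx, cy = intrinsics
    start_3d = backproject(start_pixel, depth_by_pixel[start_pixel], fx, fy, cx, cy)
    end_3d = backproject(end_pixel, depth_by_pixel[end_pixel], fx, fy, cx, cy)
    return math.dist(start_3d, end_3d)


# Two pixels 200 px apart on a surface 5 m away, with a 1000 px focal length.
intrinsics = (1000.0, 1000.0, 640.0, 360.0)
depth_by_pixel = {(640, 360): 5.0, (840, 360): 5.0}
length_m = measure_between_pixels((640, 360), (840, 360), depth_by_pixel, intrinsics)
```

Picking the endpoints in the image means the pixel grid, not the point spacing of the cloud, sets how precisely an edge can be located.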

The actual process involves determining the correspondence between the camera images and point clouds acquired from multiple 3D sensor units, as explained in section 2.2. In doing so, characteristic data such as information about the edges visible in the image is used to provide additional detail for the edges of structural features present in the point clouds. Utilizing their orientations relative to one another, a mesh* is generated from the point cloud acquired by each 3D sensor unit and these are merged into a single mesh. By using a perspective projection model derived based on the principles of camera operation, a calculation is performed to determine which image pixels correspond to points in the mesh. 3D modeling then uses this calculation for high-resolution projection mapping onto the merged mesh, enabling the generation of images from any viewpoint in which edges and other fine details are enhanced [see Figure 5(b)].
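The pixel-to-mesh correspondence can be sketched with a standard pinhole perspective projection. The example assumes that the mesh vertices have already been transformed into the camera coordinate frame, that lens distortion is negligible, and that the nearest pixel is sampled. These are illustrative simplifications, and the function names are hypothetical rather than taken from the system itself.

```python
def project_point(point_cam, fx, fy, cx, cy):
    """Project a camera-frame 3D point to pixel coordinates (u, v)."""
    x, y, z = point_cam
    if z <= 0:
        return None  # behind the camera, so it cannot appear in the image
    return (fx * x / z + cx, fy * y / z + cy)


def sample_vertex_colors(vertices_cam, image, fx, fy, cx, cy):
    """Look up the image value seen by each mesh vertex (None if not visible)."""
    height, width = len(image), len(image[0])
    colors = []
    for vertex in vertices_cam:
        uv = project_point(vertex, fx, fy, cx, cy)
        if uv is None:
            colors.append(None)
            continue
        col, row = round(uv[0]), round(uv[1])
        inside = 0 <= row < height and 0 <= col < width
        colors.append(image[row][col] if inside else None)
    return colors


# Tiny 3x3 "image" whose values encode their own position for easy checking.
image = [[10, 11, 12], [20, 21, 22], [30, 31, 32]]
vertices = [(0.0, 0.0, 2.0), (1.0, 0.0, 1.0), (0.0, 0.0, -1.0), (5.0, 0.0, 1.0)]
colors = sample_vertex_colors(vertices, image, fx=1.0, fy=1.0, cx=1.0, cy=1.0)
```

A full projection-mapping step would also have to handle occlusion, since a vertex hidden behind another surface projects to the same pixel as the surface in front of it. That check is omitted here.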

In addition to improving the quality of the images generated from the chosen viewpoint, this also allows for accurate measurement. Moreover, object measurement can be automated by applying object recognition artificial intelligence (AI) to the image to specify the start and end points.

* Obtained by drawing edges between the vertices of objects. The mesh comprises the surfaces (polygons) enclosed by these edges.

3. Deployment Plans

This section describes how Hitachi intends to use this technology to track work progress at construction sites and current plans for deploying it as a software-as-a-service (SaaS).

Hitachi Industry & Control Solutions has drawn on the image analysis technology it has acquired from its work on developing large security surveillance camera systems to establish a cloud-based image analysis platform. Using this platform, it is currently in the process of rolling out a SaaS that provides users with numerous image analysis functions that have been standardized to address the challenges facing various industries and applications 2). This is implemented in the cloud using the functions described above as assets, providing services to customers that have image analysis at their core.

There are a number of ways in which this service can be used to improve progress tracking at worksites.

The first is the remote viewing of entire worksites or other sites that cover a wide area. This can reduce the amount of time spent on traveling to sites and walking around them to check on progress. At large sites, LiDAR sensors and cameras are installed with network connections as required to cover the areas to be monitored. Images and scans are acquired as often as is needed for progress monitoring and these can be used to view the scene from any angle. As this is provided via the cloud, users at remote locations can view the site from their chosen viewpoint on a personal computer or tablet. The available uses include progress checks that compare the current situation with saved data from the past and the measurement of object size or height by specifying two points in an image. This can be used to determine whether the object seen in an image is the correct one or to identify hazards such as access ways that are too narrow, or materials that are piled too high.
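One simple way to picture a progress check against saved data is to compare which regions of space are occupied in two scans. The sketch below uses voxel occupancy differencing, a common general-purpose technique chosen here for illustration. The article does not describe how the service compares scans, and the 0.1 m voxel size is an arbitrary assumption.

```python
import math


def voxelize(points, voxel_size_m):
    """Map each 3D point to the integer index of the voxel that contains it."""
    return {
        tuple(math.floor(coord / voxel_size_m) for coord in point)
        for point in points
    }


def compare_scans(previous_points, current_points, voxel_size_m=0.1):
    """Report voxels that became occupied or empty between two scans."""
    previous = voxelize(previous_points, voxel_size_m)
    current = voxelize(current_points, voxel_size_m)
    return {"added": current - previous, "removed": previous - current}


# One point barely moves, one object disappears, and one new object appears.
previous_scan = [(0.05, 0.05, 0.05), (1.05, 0.05, 0.05)]
current_scan = [(0.06, 0.04, 0.05), (0.05, 0.05, 1.05)]
changes = compare_scans(previous_scan, current_scan)
```

Quantizing to voxels makes the comparison tolerant of small sensor noise, at the cost of missing changes smaller than the voxel size.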

4. Conclusions

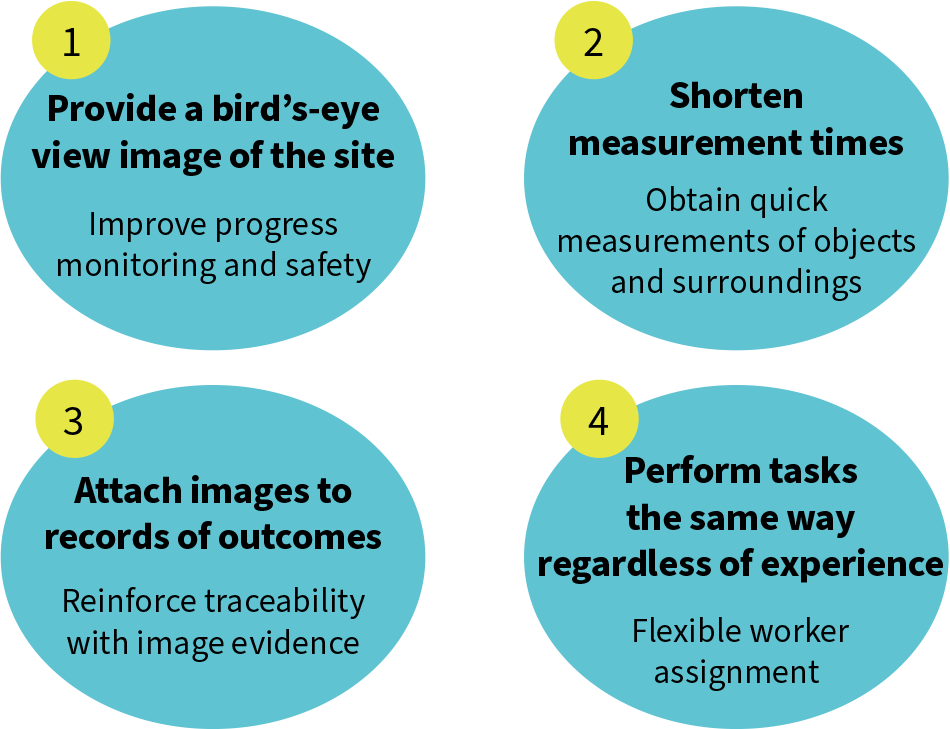

Figure 6: Benefits of RTFS

Real-time field scanning (RTFS) can improve safety and the efficiency of measurement and worksite monitoring. As the images and 3D data can be archived, it can also improve traceability by recording evidence of work outcomes. Keeping a quantitative record of work outcomes can help to standardize tasks and ensure that they are done the same way regardless of the level of experience of the worker concerned.

This article has presented an overview of RTFS, its features, and how it will be deployed. Figure 6 lists the benefits that the service is intended to provide. RTFS will improve safety and the efficiency of worksite progress monitoring. In doing so, it should make the measurement of objects and their surroundings more efficient and improve traceability by collecting “evidence” in the form of images and 3D data on work outcomes. By providing a quantitative record of work outcomes, it can also help to standardize tasks and ensure that they are done the same way regardless of the level of experience of the worker concerned.

In addition to customers in the construction and equipment leasing businesses, Hitachi also has extensive future plans for deploying the technology and service in manufacturing. Potential applications being considered include managing and tracking the inventory of produced goods at production plants in the industrial sector, use in road management for parking lots and highways, and production checks and maintenance for large machinery. With use of the measurement technology also under consideration for applications such as water level or slope measurement, Hitachi intends to put it to good use resolving challenges across a wide range of sectors and workplaces.

REFERENCES

1) Mamoru Abe, “Strategy Design for Reform and Improvement: Construction DX,” Shuwa System (Jan. 2021) in Japanese.

2) “Video Analytics Service to Boost Customers’ Operational Efficiency,” Hitachi Review (Jan. 2024).